The team as we knew it no longer exists

Written by ETB - Empowerment Team Berlin

AI as a team member boosts performance – and shakes up what it means to be human in a team.

An ordinary recurring meeting. Almost.

It's 9:30 a.m. Five people have gathered for a video conference, among them two in Frankfurt, one in Singapore, and one on a commuter train somewhere between Munich and Augsburg. This is the usual picture of a modern team: distributed, international, hybrid. But this morning, someone else is there as well. Not a person, with no camera and no microphone. And this participant worked through the night while the others slept.

It evaluated the minutes of the last meeting, compared three market analyses, created a decision template, and ran through three scenarios, including a risk assessment. Its name is in the calendar like every other team member's: "Strategic Horizon, Strategy Agent," or simply Hori to most.

CEO Marie asks, "Have you read Hori's forecast?" Everyone nods. "We really need to take action. Let's go through the options that Hori has generated."

Teams of a kind we have never seen before are emerging everywhere: not in future labs, but in average companies and ordinary meetings.

We ourselves are in a real-time lab and can observe how quickly and fundamentally our working world is changing. And it is far from clear what it means to be in a team in which not all members are human. That can sometimes feel uncanny.

1. A new kind of team

From hybrid to multimodal teams

"Hybrid teams" have been widespread since COVID, if not before. Sometimes the term simply means a mix of office and remote work. Sometimes it is extended to international teams. It is now normal for people to work in different locations and time zones, at different times, and from different cultural backgrounds. We have become accustomed to this, even if it is sometimes difficult to manage hybrid teams and to keep cultural differences from becoming disruptive.

Team management is currently becoming even more challenging. Teams are no longer just hybrid, but increasingly becoming multimodal. What is being investigated in organizational research laboratories around the world under the term "Human-AI Teams" (HAITs) is a qualitatively different form of collaboration. AI systems are no longer just tools. They act as active team members who take on their own tasks, influence decisions – sometimes even making and implementing them themselves – and thus fundamentally change processes.

The key difference from previous software lies in what is known as "agency." This term encompasses a mixture of "capacity to act," "autonomy," and "effectiveness." What does that mean? A classic analysis tool waits until a human being operates it. A modern AI agent plans independently, delegates subtasks, learns from feedback, and communicates its interim results. Structurally speaking, it behaves like a team member (Lou et al., 2025).

What is an AI agent - and how does it differ from an AI application?

An AI application executes what a human being instructs it to do. An AI agent plans, decides, and acts independently toward a goal. It can orchestrate multi-step processes, use tools, evaluate interim results, and iterate. Modern agents are based on Large Language Models (LLMs) and are capable of performing tasks that until recently were reserved exclusively for highly qualified specialists (Lou et al., 2025).
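The difference can be illustrated with a deliberately small Python sketch. It is a toy under stated assumptions, not a description of how any real agent product works: the tool `fetch_market_size`, its invented figures, and the functions `run_application` and `run_agent` are all hypothetical, and no language model is involved. The "application" executes the single instruction it is given, while the "agent" works through a plan, calls a tool, checks its interim result against the goal, and iterates.

```python
from typing import Callable

# Hypothetical tool: a plain function standing in for a real data source.
# The figures are invented purely for illustration.
def fetch_market_size(region: str) -> float:
    return {"EU": 120.0, "APAC": 200.0}.get(region, 0.0)

TOOLS: dict[str, Callable[[str], float]] = {"market_size": fetch_market_size}

def run_application(tool: str, argument: str) -> float:
    """An 'application': performs exactly the one step a human asks for."""
    return TOOLS[tool](argument)

def run_agent(
    target: float, candidates: list[str], max_steps: int = 10
) -> tuple[list[str], float, list[str]]:
    """An 'agent' (toy version): pursues a goal over several steps.

    Goal: pick regions until their combined market size reaches `target`.
    """
    plan = list(candidates)              # plan: queue of regions to examine
    chosen: list[str] = []
    total = 0.0
    log: list[str] = []
    for _ in range(max_steps):
        if total >= target:              # evaluate the interim result
            log.append("goal reached")
            break
        if not plan:                     # nothing left to try
            log.append("plan exhausted")
            break
        region = plan.pop(0)             # decide on the next subtask
        size = TOOLS["market_size"](region)  # act by using a tool
        log.append(f"{region}: {size}")
        if size > 0:                     # keep only useful findings
            chosen.append(region)
            total += size
    return chosen, total, log

if __name__ == "__main__":
    print(run_application("market_size", "EU"))
    print(run_agent(300.0, ["EU", "LATAM", "APAC"]))
```

In the sketch, the human calling `run_application` has to know which question to ask next, whereas `run_agent` is only given the goal and a list of candidates and decides on its own when to stop.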

Multimodal teams are therefore constellations in which competence, attention, and responsibility are distributed among human and non-human actors. The term is deliberately chosen: "multimodal" does not refer to technology, but to a fundamentally new team architecture.

A real-world example: GitHub Copilot and the beginning of the lonely developer

One of the best-documented examples of multimodal teams comes from software development. GitHub Copilot, an AI system that generates code suggestions in real time, has established a new division of labor in large development teams worldwide: humans define intentions, formulate architectures, review, and make decisions. The AI agent writes, tests, and makes suggestions. This makes pair programming possible with a single human developer.

A study by Peng and colleagues (2023) showed that experienced developers integrated AI-generated code much more effectively than newcomers to the profession – meaning that the synergistic effect depends heavily on the human expertise that evaluates and filters the AI output. This finding is symptomatic of a broader insight: multimodal teams do not automatically realize their potential, but only under certain conditions that depend on humans.

The more effective such AI assistants become, the more an individual can achieve with their help. The scenario of a single developer orchestrating a multitude of coding agents is realistic. In this case, the human side of the team shrinks to this one person, which carries a clear risk of loneliness.

2. The promise: Why it can work

Complementarity as a core principle

Why should teams with AI be better than teams without? The intuitive answer, "because AI can calculate more and faster," falls short. The deeper reason is structural: humans and AI agents are complementary. They are good at different things, and this diversity can be productive.

Humans bring contextual understanding, implicit experiential knowledge, moral judgment, and emotional intelligence. AI agents can process large amounts of data, compensate for information asymmetries within the team, be consistently available, and operate without fatigue (Zercher et al., 2025). The strength of the multimodal team lies precisely in this tension: what one lacks, the other provides.

Berretta and colleagues from Ruhr University Bochum summarize it precisely: The basic prerequisites for functioning human-AI synergies lie in four conditions.

- AI capabilities and design that fit the team

- AI literacy and competence on the part of humans

- Appropriate processes at the team level

- Shared mental models and situational awareness

The human members must be able to understand and anticipate the behavior of the AI. Only then can real synergies emerge, rather than mere coexistence. This can build trust among team members and in the systems (Berretta et al., 2023).

Decision quality in practice

Perhaps the most convincing evidence for the promise of multimodal teams comes from medicine. In several studies, radiology teams that have integrated AI-supported diagnostic systems into their workflows consistently show better results than purely human teams: fewer overlooked findings, faster decision times, less variance between reviewers.

The key point is that AI alone does not make better decisions. Nor do humans alone. Rather, it is humans who know when they can trust AI suggestions – and when they cannot (Zercher et al., 2025). This is a paradigm shift. Competence is shifting from technical expertise to meta-competence – the ability to cooperate with AI partners.

In its latest Skill Partnerships Report, McKinsey calculated that, in a medium adoption scenario, AI-powered agents and robots could unlock around $2.9 trillion in economic value per year in the US alone by 2030 – but only if organizations prepare their employees and redesign entire workflows instead of just automating individual tasks (McKinsey, 2025).

3. The downside: What is at stake

The motivation paradox

In the spring of 2025, a research group led by Jialin Li published a study in Scientific Reports that attracted considerable attention in the research community. The key message was as clear as it was disturbing: human-AI collaboration improves immediate task performance – but it undermines the intrinsic motivation of employees (Li et al., 2025).

The explanation is psychologically plausible: when AI takes over the analytical, creative, and challenging parts of a task – precisely those parts that humans find meaningful – the work loses its intrinsic motivational power. The result is correct, the process is more efficient, but the experience is poorer. In the long run, this jeopardizes engagement – perhaps not in the next quarter, but in the next year.

Isolation, exhaustion, redundancy

Another finding from recent research deserves special attention: AI collaboration can increase social isolation. When employees interact more with AI than with colleagues, the quality of their working life changes subtly but noticeably. Studies report feelings of loneliness, emotional exhaustion and, in extreme cases, depressive symptoms (Lee et al., 2025).

A long-term Korean study involving 381 employees showed that the introduction of AI can significantly reduce psychological safety within a team – a finding that should be particularly alarming for HR managers, as psychological safety is one of the most robust predictors of team performance (Lee et al., 2025).

More work despite increased efficiency

Ranganathan and Ye warn that what appears to be higher productivity can mask a creeping increase in workload, fatigue, weakened decision-making ability, and ultimately burnout or turnover (Ranganathan & Ye, 2026).

In an eight-month ethnographic study, the authors found that AI made additional work seem accessible, efficient, and even rewarding, encouraging employees to expand their scope of responsibilities rather than replace or eliminate tasks. Productivity initially skyrocketed without a corresponding reduction in workload or working hours, which can lead to the negative consequences described above.

4. Humans and AI in a team

Let's return to the meeting at the beginning.

Hori – Strategy Agent – has done its job. The analyses are available, the scenarios have been played out, and the decision template is ready. The team discusses.

And then something happens that is beyond Hori's capabilities: one of the participants asks, "Have we actually considered the impact on our team in Asia? They are going through a difficult phase right now." Another says, "I think we are underestimating how this decision will be perceived by our customers – emotionally, I mean." The group is silent for a moment. Then a conversation begins that no AI can anticipate.

It is precisely this change of perspective and the associated mindset that makes a difference. AI is not threatening per se, but it is also not harmless per se. AI can do many things on its own. And what remains is the most difficult and valuable thing, namely the human aspect: judgment, empathy, responsibility, meaning.

For managers and HR professionals, a clear agenda emerges when teams and AI grow closer together:

- Multimodal teams are not a thing of the future, but of the present – those who ignore them will lose out.

- It is not technology alone that determines success or failure, but the quality of human leadership, support, and design.

- The most important investment is not in better AI tools, but in people who know how to work with them and who still know what they can do without them.

- Teams need support at the start, in developing their own AI-integrating "way of working," and at other important milestones.

The team we knew no longer exists. The team that can emerge could be better than anything we have known before. But only if we consciously shape it.

References and further reading

Berretta, S., Tausch, A., Ontrup, G., Gilles, B., Peifer, C. & Kluge, A. (2023). Defining human-AI teaming the human-centered way: a scoping review and network analysis. Frontiers in Artificial Intelligence, 6. https://doi.org/10.3389/frai.2023.1250725

Lee, S. et al. (2025). The dark side of artificial intelligence adoption: linking AI adoption to employee depression via psychological safety and ethical leadership. Humanities and Social Sciences Communications. https://www.nature.com/articles/s41599-025-05040-2

Li, J. et al. (2025). Human-generative AI collaboration enhances task performance but undermines human’s intrinsic motivation. Scientific Reports. https://www.nature.com/articles/s41598-025-98385-2

Lou, B. et al. (2025). Unraveling Human–AI Teaming: A Review and Outlook. arXiv preprint. https://arxiv.org/abs/2504.05755

McKinsey Global Institute (2025). Agents, robots, and us: Skill partnerships in the age of AI. https://www.mckinsey.com/mgi/our-research/agents-robots-and-us-skill-partnerships-in-the-age-of-ai#/

Peng, S. et al. (2023). The Impact of AI on Developer Productivity: Evidence from GitHub Copilot. https://doi.org/10.48550/arXiv.2302.06590

Zercher, F. et al. (2025). How Can Teams Benefit From AI Team Members? Journal of Organizational Behavior. https://doi.org/10.1002/job.2898

You might also like

-

Forget team coaching if the setup isn't right!

- Written by ETB - Empowerment Team Berlin

- Published on

-

Change Theater?

- Written by Uwe Weinreich

- Published on

-

7+1 Elements of High-Performance Teamwork

- Written by ETB - Empowerment Team Berlin

- Published on

-

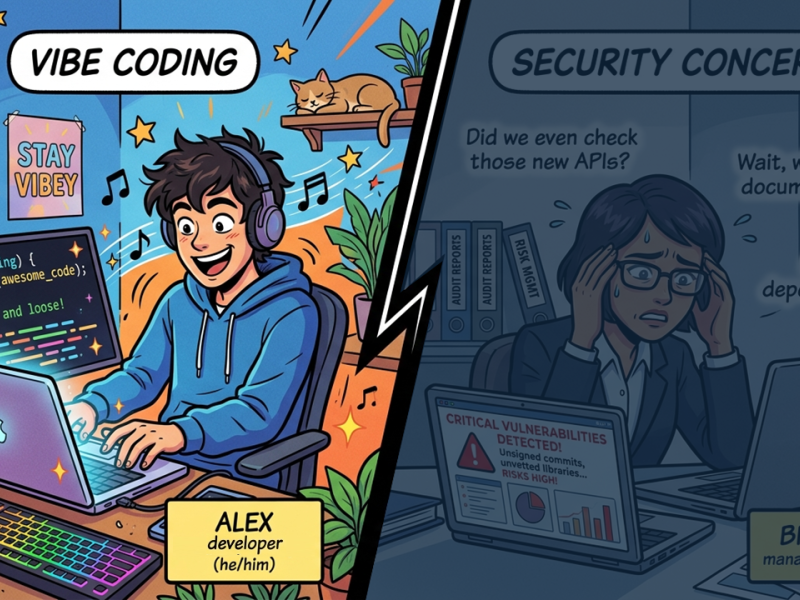

Vibe Coding? Sure, But Strategically!

- Written by Uwe Weinreich

- Published on

-

The Automation Paradox

- Written by Uwe Weinreich

- Published on