Posted in Leadership, Teams, Artificial Intelligence.

How to Lead When Part of Your Team Can No Longer Be Led?

Written by ETB - Empowerment Team Berlin

Future teams consist of humans and AI agents. The latter are known for being know-it-alls and unmanageable.

A Serious Business Problem

Tom is chairing the department meeting himself this time. The topic is too important. One of the industrial equipment company's most important customers has written a major complaint directly to the CEO. This needs to be resolved. Marie wants to see a proposed course of action in two hours.

Nicola reports in a desperate voice that she had worked everything out to the best of her knowledge and belief before issuing the faulty order to the on-site service team. She even conducted an intensive session with Collab Operaitor, the central agentic system for operational decisions. The order was refined and essentially confirmed through this process. Although ‘autonomous predictive maintenance’ had been agreed upon, in this case the customer felt that it constituted unjustified interference in their own operations.

So another player is involved. Tom decides to actively include Collab Operaitor and invites the system into the meeting. After a brief description of the situation and an even briefer thinking time – the monitor shows 26 seconds – the system responds: "After thorough analysis, I have concluded that the order developed by Nicola and myself is entirely correct. It corresponds exactly to the parameters of the order from 6 months ago and, through the machine upgrade, will enable the customer to work 13.2% more productively than before. My proposed course of action: A clarifying conversation with the customer."

Everyone in the room shakes their head. The system has overlooked an important factor that – admittedly – the team was also unaware of before the complaint.

The need to lead human-AI teams is arriving gradually, but we should be preparing for it intensively right now.

In the previous article, we examined in depth the changes brought about by HAITs (Human-AI Teams) and reviewed the current state of research.

In this article, we broaden our perspective to the implications for leading hybrid, multimodal teams and once again look at what research has to say on the matter.

1. Leading Multimodal Human-AI Teams (HAITs)

When the Team Consists of Humans and Machines

Leadership has always been a human phenomenon: The Center for Creative Leadership defines leadership as "a social process that enables individuals to achieve results together that they could never accomplish alone." This doesn't change when AI agents enter the picture. But the conditions under which this social process takes place are changing fundamentally.

A thematic literature review of 116 studies from 2022 to 2025 identifies the central balancing act for leaders as one between technological innovation and human-centered practices. The greatest challenges are not technical in nature but cultural and psychological (Albannai & Raziq, 2025):

- building trust,

- dealing with algorithmic bias, and

- the question of who bears responsibility when an AI has made a decision.

AI Literacy and Bilingual Leadership: The New Core Competencies

Vegard Kolbjørnsrud from the BI Norwegian Business School coined the term "Bilingual Leadership" for the competence this requires. Leaders in intelligent organizations must be "bilingual": They must understand people – their emotions, motivations, resistance – and at the same time be able to assess technical systems: What can the agent do, what can it not do, how reliable are its outputs, and where are the limits of its judgment? (Kolbjørnsrud, 2024). In practice, this requires not only knowing the strengths and weaknesses of people and systems precisely, but also designing the organization and work accordingly.

Leadership therefore doesn't begin only in the interaction with existing HAITs, but already before that, when the foundations for work processes are being laid. Task design, team design, and organizational design become additional requirements for leaders – ideally supported by specialized HR professionals.

In short, bilingual leadership is a leadership style in which managers combine strong social competencies with technical knowledge, enabling them to understand and communicate with both human and digital colleagues.

This sounds like an enormous demand. And it is, because it means:

- Shifting from "command" to context: Instead of traditional command-and-control structures, leaders create an organizational context and "operational charters" (values and decision rights) that enable teams to successfully navigate AI disruptions.

- Framing and goal-setting: Leaders set ambitious goals and narratives that machines cannot develop. In addition to hard facts, they benefit from being able to read the mood in the room and interpret emotional reactions.

- Strengthening judgment and critical thinking: AI-generated pattern recognition and predictions must be combined with human ethical judgment and holistic thinking to make complex "tough decisions" when values conflict.

- Mutual learning: Leaders foster environments where humans and machines train each other to improve performance.

- Intelligent interrogation: Leaders must know how to effectively question – even interrogate – AI systems to validate their results and reasoning.

This requires a number of prerequisites:

- AI literacy and technical knowledge: Leaders can no longer ignore technology or delegate technical decisions. They must understand what digital actors can accomplish and which problems they are best suited to solve.

- Cultivating human strengths: Empathy, wisdom, trust-building, and ethical judgment are critically important, as these are areas where humans have an irreplaceable competitive advantage over AI.

- Growth mindset: Working with the awareness that we can improve together is necessary to enable collaborative, exploratory learning with digital actors.

- Fusion skills: These are specific skills that serve to combine the strengths of humans and machines, e.g., knowing how to effectively divide work between human and digital actors.

- Self-reflection: Leaders must invest time in reflection and "inner work," for example through coaching, to understand what success means for themselves and the long-term viability of their organization.

The findings of Sternfels and colleagues (2026) show that leaders who excel in classical virtues – decisiveness, clarity, giving direction – have a strong starting position. These qualities are not becoming obsolete; they are becoming more important, because while AI can condense data and calculate scenarios, it cannot decide what truly matters.

"CEOs and other C-suite leaders will not always be the smartest people in the room. As a result, traditional command-and-control approaches are likely to fall flat. It will be much more important, instead, for these leaders to create the context in which their teams can successfully navigate AI-informed process changes, role changes, and other internal and external business disruptions"

Operational Charters Connect and Bind People and Systems

Framing and goal-setting are an increasingly important factor in day-to-day leadership. Uwe Weinreich sees a great opportunity in designing meaningful collaboration between humans and AI systems by granting both the greatest possible freedom to shape their own work (Weinreich, 2026). This allows both to contribute their very specific skills to the best of their abilities.

To keep everything moving in the right direction and, above all, to prevent it from getting out of hand, clear operational charters are defined. They establish the direction of development, meaning goals, measurable success criteria, timeframes, and the like, but also clear boundaries for action. When humans or AI systems reach such a boundary, they are required to coordinate the next steps with their leader, sometimes even with senior management.

The clear advantage of the operational charter concept is that comparable rules apply to humans and machines and that routines for edge cases are defined. This enables largely smooth operations, though it requires considerable effort in the design phase.
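The idea of one charter with shared boundaries and escalation rules can be illustrated with a small data structure. The following is only a minimal sketch under stated assumptions: the class names, the boundary names, the threshold values, and the escalation roles are all hypothetical and are not taken from Weinreich's book or from any real system. It merely shows how the same check could be applied to a human and to an AI agent.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Boundary:
    """A limit on action; exceeding it requires coordination with a leader."""
    name: str          # hypothetical metric name
    max_value: float   # hypothetical threshold
    escalate_to: str   # hypothetical role, e.g. "team lead"


@dataclass
class OperationalCharter:
    """Hypothetical charter: direction (goal, success criterion) plus boundaries."""
    goal: str
    success_criterion: str
    boundaries: list[Boundary] = field(default_factory=list)

    def review(self, actor: str, action: dict[str, float]) -> tuple[Decision, list[str]]:
        """Apply the same rules to any actor, whether human or AI agent."""
        notes = [
            f"{actor}: '{b.name}' exceeds {b.max_value}, coordinate with {b.escalate_to}"
            for b in self.boundaries
            if action.get(b.name, 0.0) > b.max_value
        ]
        decision = Decision.ESCALATE if notes else Decision.PROCEED
        return decision, notes


# Illustrative values only.
charter = OperationalCharter(
    goal="Raise customer machine productivity",
    success_criterion="Agreed uptime target met",
    boundaries=[
        Boundary("customer_downtime_hours", 2.0, "team lead"),
        Boundary("order_value_eur", 50_000.0, "senior management"),
    ],
)

agent_decision, agent_notes = charter.review(
    "AI agent", {"customer_downtime_hours": 6.0, "order_value_eur": 12_000.0}
)
human_decision, human_notes = charter.review(
    "Human planner", {"customer_downtime_hours": 1.0, "order_value_eur": 12_000.0}
)
```

In this sketch the AI agent's proposal crosses the downtime boundary and is routed to the team lead, while the human planner's proposal stays within the charter. The design effort the text mentions lies in choosing which boundaries to define in the first place, which no code can decide.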

Responsibility Remains Human

One of the most important principles in dealing with multimodal teams – and one that enjoys broad consensus in leadership research – is: Accountability remains with humans. AI agents can prepare, influence, and execute decisions. But the moral and organizational responsibility for outcomes lies with humans.

This must not remain mere rhetoric but must become a lived structural necessity. Researchers from the University of Murcia describe how leaders in human-AI teams are compelled to develop new roles: as interpreters of algorithmic data, as empathetic mediators of automated decisions, as ethical authorities in ambiguous situations (Zárate-Torres et al., 2025). No AI fills these roles. And it should not take them on.

2. What Teams and Organizations Need

Anchoring AI Literacy as a New Core Competency

This has already been stated for the individual level and applies equally at the structural level: Nothing works without AI literacy. Multimodal teams of humans and AI agents do not function on their own. They need conditions. The first and most fundamental is AI literacy – not in the sense of programming skills, but of the ability to act competently when working with AI systems.

A study on "AI Literacy" in human-AI teams shows: Teams whose members can critically evaluate AI outputs, name limitations, and calibrate trust situationally achieve significantly better results than teams that either adopt AI uncritically or distrust it wholesale (Pan et al., 2025). AI literacy is the decisive moderator between the promise of multimodal teams and their actual performance.

Therefore, AI literacy should be anchored as a building block within the organization, as a recurring seminar module, as a topic in meetings, and in day-to-day leadership.

The impetus to invest here exists. 77% of employers surveyed in the World Economic Forum's Future of Jobs Report (2025) indicated plans for significant investments in reskilling and upskilling by 2030 – with a particular focus on AI literacy, critical thinking, and collaborative skills. The need has been recognized. Implementation is lagging behind.

The Danger of Over-Automation

A warning that repeatedly appears in the research literature deserves special attention: Organizations risk eroding competencies when AI takes over too much. The human brain learns new things and dismantles old structures that are no longer used.

A quick self-test:

How good are you still at mental arithmetic? Let's just try a simple task:

7 * 13 = ?

How long does it take you?

To maintain core competencies, it makes sense to conduct monthly "exercises without AI" so that teams can preserve and further develop them.

This sounds trivial, but it is not. If the AI agent goes down tomorrow, we are left empty-handed. Organizational resilience requires that people not only excel with AI but also remain capable of acting without it.

Continuous Calibration: AI Is Not a One-Time Implementation

A technical finding with significant organizational consequences comes from Industry 5.0 research: AI systems must not simply be deployed once and then forgotten. They must be continuously adapted to the team – to changing tasks, new members, different contexts. What researchers call "Late Shaping" is, in practice, a permanent management task (Krause et al., 2024).

Particularly relevant: Updates intended to improve AI performance can actually worsen team performance if people are not brought along. This fundamentally changes the relevance of collaboration between IT departments and HR.

AI is no longer a tool that you introduce and maintain. It is a team member that evolves – and that must be actively led. With all the positive and negative consequences that entails.

Let us summarize. There are several indispensable elements that organizations must provide:

- Cultivating inherently human strengths – both in leaders themselves and as a leadership task toward teams

- AI literacy – deeper understanding and appropriate engagement with AI

- Critical thinking – as a safeguard against all-too-convincingly presented but flawed AI outputs

- Accountability – humans, as the ultimately responsible actors, must also be granted that role

- Operational charters – they set the boundaries of the playing field for humans and machines, making complexity and responsibility livable

3. What Will the Future Look Like?

The "Frontier Firm" as a New Organizational Model

Microsoft, in its Work Trend Index 2025 – based on data from 31,000 workers in 31 countries – described a new organizational model: the "Frontier Firm." Its key characteristics are hybrid teams of humans and agents, on-demand intelligence instead of fixed structures, and the ability to scale with AI without proportionally increasing headcount. This is also the expectation of those surveyed: 81% of leaders expect AI agents to be moderately to extensively integrated into their business operations in the medium term (Microsoft, 2025).

What does this look like in practice? In a startup in the Microsoft sample, the company chose not to appoint an experienced marketing professional to the CMO position. Instead, a junior marketing manager was given AI tools with which she independently managed complete campaigns.

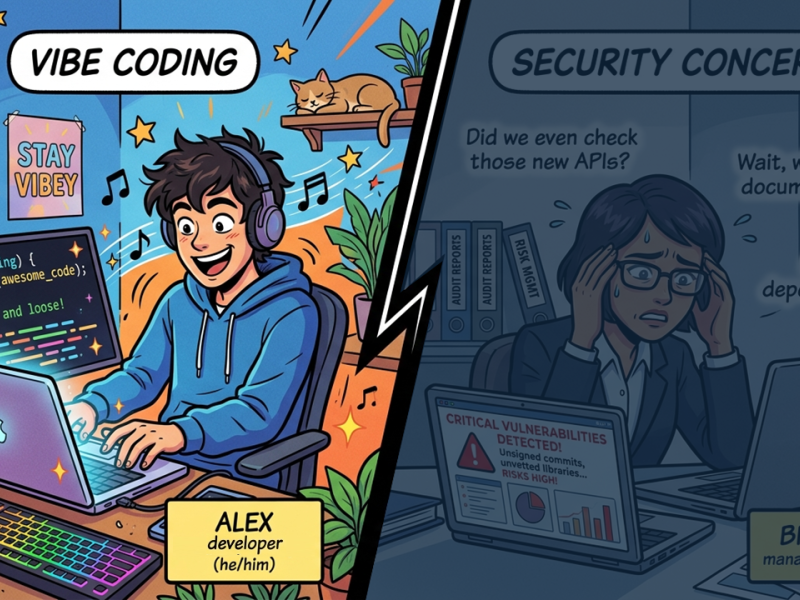

An even more extreme and highly current example is OpenClaw, an agent system of enormous significance that was developed by a single Austrian developer, Peter Steinberger. He built it by intelligently connecting systems and having the coding largely done by AI systems, so-called vibe coding. This approach has also left OpenClaw with major security vulnerabilities.

All of this means for leadership:

- Even career starters in Frontier Firms effectively become managers from day one, because they lead AI agents.

- The role of employees shifts from team member to individual contributor who works like a CEO and leads an entire arsenal of agents.

- Both developments demand that the highest level take on more and more responsibility for shaping ethics, strategy, governance, and concrete operational charters. Only in this way can responsibility remain bearable.

Org Charts Become Work Charts

Both Brian Solis and Uwe Weinreich describe one of the most radical predictions for corporate structure: AI co-designs organizations – at least in part. Organizations become fluid. Static org charts give way to dynamic "work charts" – outcome-oriented teams of humans and agents that come together for specific tasks and dissolve afterward. Employees no longer build linear career paths but portfolios of completed missions (Solis, 2025; Weinreich, 2026).

The World Economic Forum supports this direction: "The organizational embedding of an agentic workforce will expand the management horizon – the tasks to be completed will be carried out by a hybrid workforce of machines and humans, while leadership responsibility for outcomes, risks, and rewards remains with humans" (WEF, 2026).

Leading Means (Also) Setting the Right Frameworks

Let's look once more at the opening scenario and the hybrid human-AI decision that so angered the important customer.

Tom reminds the assembled team that what matters most right now is not looking for someone to blame, but finding solutions that align with the company's values. Nicola breathes a sigh of relief. Elif speaks up: "I think we've stumbled upon a problem in the operational charter that we need to solve. The human side of our customers is insufficiently represented or considered in the systems."

Strong agreement. A creative discussion begins about how the definitions can be revised. An achievement that only humans can deliver.

Top leaders bear great responsibility. The complexity of possible solutions emerging from multimodal teams is so vast that control is no longer the appropriate method for risk minimization. Ethics, governance, organizational design, operational charters for humans and systems, and an investment in cultivating both human core competencies and personal responsibility are essential.

Leadership is becoming even more demanding – and precisely because it must now also encompass technical AI agents, it is more necessary than ever that leaders are given the opportunity to develop their own and their team's human strengths.

References and Further Reading

Albannai, N. A. A., & Raziq, M. M. (2025). Navigating ethical, human-centric leadership in AI-driven organizations: a thematic literature review. The Service Industries Journal, 1–28. https://doi.org/10.1080/02642069.2025.2534360

Kolbjørnsrud, V. (2024). Designing the Intelligent Organization: Six Principles for Human-AI Collaboration. California Management Review / SAGE. https://doi.org/10.1177/00081256231211020

Krause, F. et al. (2024). Managing human-AI collaborations within Industry 5.0 scenarios via knowledge graphs. Frontiers in Artificial Intelligence, 7. https://doi.org/10.3389/frai.2024.1247712

Microsoft (2025). 2025: The Year the Frontier Firm is Born. Work Trend Index. https://www.microsoft.com/en-us/worklab/work-trend-index/2025-the-year-the-frontier-firm-is-born

Pan, Z. et al. (2025). AI literacy and trust: A multi-method study of Human-GAI team collaboration. Computers in Human Behavior: Artificial Humans, 4, 100162. https://doi.org/10.1016/j.chbah.2025.100162

Solis, B. (2025). 7 Ways AI Will Change the Future of Work by 2030. ServiceNow / briansolis.com.

Sternfels, B. et al. (2026). Developing human leadership in the age of AI. McKinsey & Company. https://www.mckinsey.com/capabilities/strategy-and-corporate-finance/our-insights/building-leaders-in-the-age-of-ai

Weinreich, U. (2026). Menschen. Unternehmen. KI. Zukunft gestalten in einer Wirtschaft im Umbruch. Stuttgart: Schäffer-Poeschel.

World Economic Forum (2025/2026). Future of Jobs Report 2025; Future of Work Decision-Maker Perspectives. https://www.weforum.org

Zárate-Torres, R. et al. (2025). Influence of Leadership on Human–Artificial Intelligence Collaboration. https://pmc.ncbi.nlm.nih.gov/articles/PMC12292626/
